- Blog

- Nisha sarang salary

- Hp wifi driver windows 7

- Online word to pdf converter fast

- Hello neighbor online free cool games

- How to uninstall office 365 on mac

- Lexmark x422 camera driver indir

- Respondus lockdown browser free download uta

- Mac address learning cisco

- Microsoft office excel 2013 images

- How to add crop marks in word 2013

- Find word in file unix command

- Developer tab excel for mac 2016

- How to insert a citation in powerpoint 2013

- Ios data recovery for mac

- Directory list and print patch

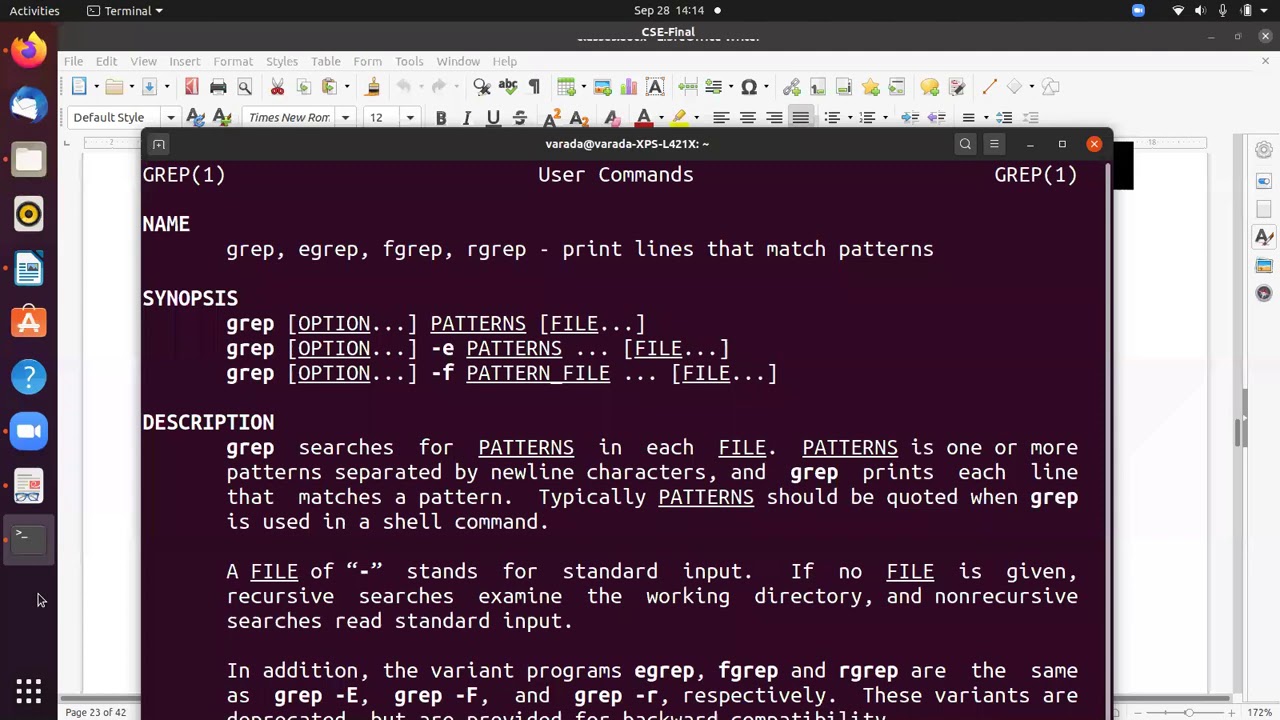

If you need to search for a string of text, rather than just a single word, you will need to wrap the string in quotes. For example, what if we needed to search for the “My Documents” directory instead of the single-word “Documents” directory?

$ ls | grep 'My Documents'

Grep accepts both single quotes and double quotes, so wrap your string of text with either. If grep returns nothing, that means it couldn’t find the text you were searching for.

While you can use grep to search the output piped from other command-line tools, you can also use it to search documents directly. Here’s an example where we search a text document for a string.

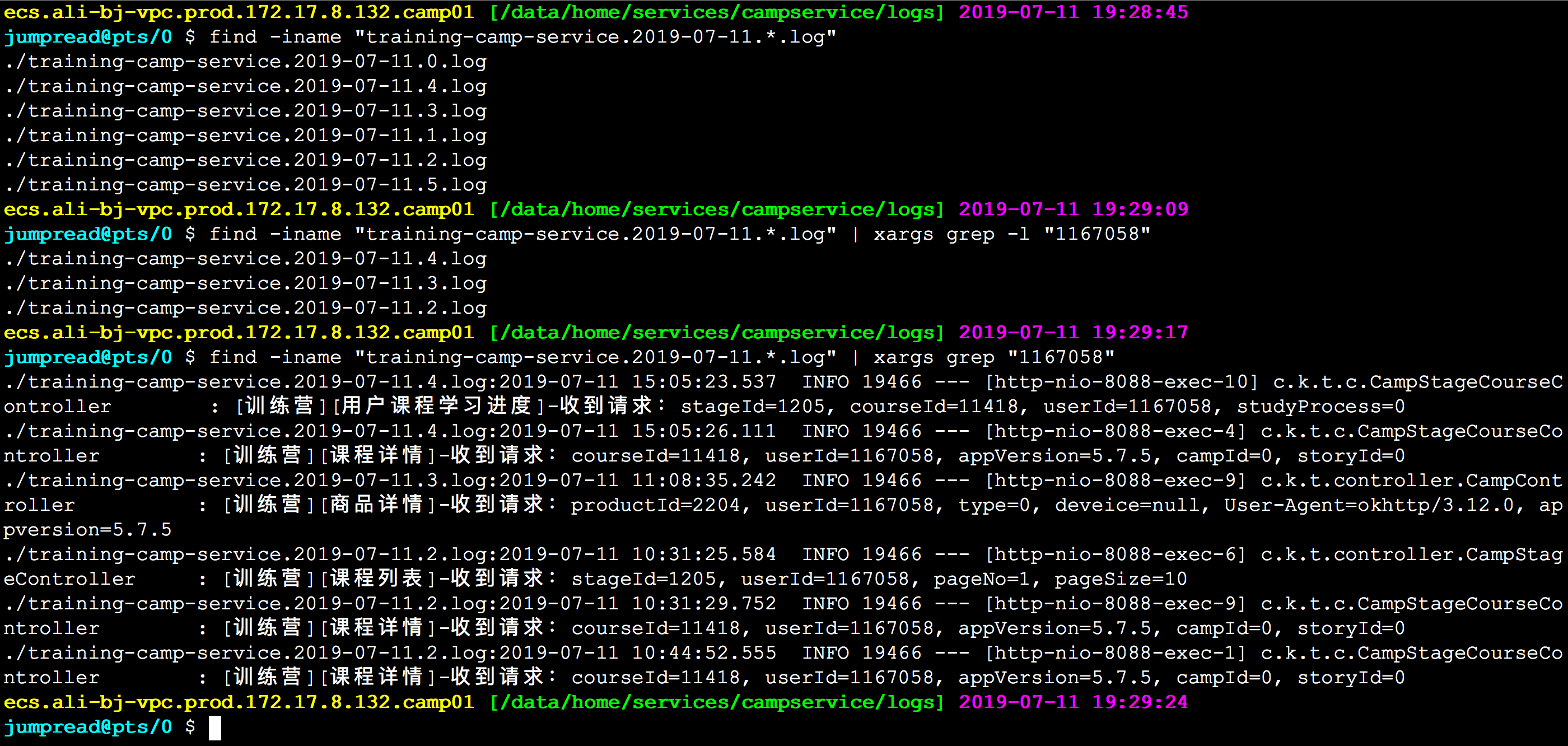
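As a minimal sketch of searching a file directly, the commands below build a throwaway text file and run grep against it. The file contents and the variable names (`notes`, `match`, `found`) are assumptions made up for illustration. They are not taken from the screenshot.

```shell
# Create a small sample file to search (hypothetical content for illustration).
notes=$(mktemp)
printf '%s\n' 'Backups live in My Documents' 'Photos live in Pictures' > "$notes"

# Search the file directly: grep prints every line that contains the quoted string.
match=$(grep 'My Documents' "$notes")
echo "$match"

# When no line matches, grep prints nothing and exits with a non-zero status.
# The -q option suppresses output so only the exit status is used.
if grep -q 'Downloads' "$notes"; then
    found=yes
else
    found=no
fi
echo "Downloads found: $found"

# Clean up the sample file.
rm -f "$notes"
```

Checking the exit status this way is handy in scripts, where an empty result is easier to test for than to spot by eye.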

Grep is a command-line tool that Linux users use to search for strings of text. You can use it to search a file for a certain word or combination of words, or you can pipe the output of other Linux commands to grep, so grep shows you only the output that you need to see.

Let’s look at some really common examples. Say that you need to check the contents of a directory to see if a certain file exists there. That’s something you would use the “ls” command for. But to make checking the directory’s contents even faster, you can pipe the output of the ls command to the grep command.

Let’s look in our home directory for a folder called Documents. And now, let’s try checking the directory again, but this time using grep to check specifically for the Documents folder.

$ ls | grep Documents

As you can see in the screenshot above, using the grep command saved us time by quickly isolating the word we searched for from the rest of the unnecessary output that the ls command produced. If the Documents folder didn’t exist, grep wouldn’t return any output.

There’s a CPU load and time penalty for repeatedly calling a command when you could call it once and pass all the filenames to it in one go. And if you’re invoking a new shell each time to launch the command, that overhead gets worse. But sometimes, depending on what you’re trying to achieve, you may not have another option. Whatever method your situation requires, no one should be surprised that Linux provides enough options that you can find the one that suits your particular needs.
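The cost of repeated invocation can be made visible with a rough sketch like the one below, which counts how many times a command is started for the same three files. The temporary directory, the file names, and the use of `echo run` as a stand-in command are all assumptions for illustration.

```shell
# Build a scratch directory holding three sample files (hypothetical names).
workdir=$(mktemp -d)
touch "$workdir/a.txt" "$workdir/b.txt" "$workdir/c.txt"

# One invocation per file: "-exec ... \;" starts echo once for every match,
# so counting the output lines counts the invocations.
per_file=$(find "$workdir" -name '*.txt' -type f -exec echo run {} \; | wc -l | tr -d ' ')

# One batched invocation: xargs hands all the names to a single echo call,
# which prints them on one line.
batched=$(find "$workdir" -name '*.txt' -type f -print0 | xargs -0 echo run | wc -l | tr -d ' ')

echo "per-file invocations: $per_file, batched invocations: $batched"

# Clean up the scratch directory.
rm -rf "$workdir"
```

With only three files the difference is negligible, but the per-file count grows with the number of matches while the batched form stays at a handful of calls.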


Some commands take their input only as command-line parameters and ignore piped input. To address this shortcoming, the xargs command can be used to parcel up piped input and feed it into other commands as though it were command-line parameters to that command. This achieves almost the same thing as straightforward piping. That’s “almost the same” thing, and not “exactly the same” thing, because there can be unexpected differences with shell expansions and file name globbing.

We can use find with xargs to have some action performed on the files that are found. This is a long-winded way to go about it, but we could feed the files found by find into xargs, which then passes them to tar to create an archive file of those files. We’ll run this command in a directory that has many help system PAGE files in it.

$ find . -name "*.page" -type f -print0 | xargs -0 tar -cvzf page_

The command is made up of different elements.

- find . -name "*.page" -type f -print0: The find action will start in the current directory, searching by name for files that match the “*.page” search string. Directories will not be listed because we’re specifically telling it to look for files only, with -type f. The -print0 argument tells find to end each filename with a null character, so whitespace is not treated as the end of a filename. This means that filenames with spaces in them will be processed correctly.
- xargs -0: The -0 argument tells xargs to not treat whitespace as the end of a filename.
- tar -cvzf page_: This is the command xargs is going to feed the file list from find to. The tar utility will create an archive file called “page_.”

We can use ls to see the archive file that is created for us.
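The pipeline described above can be tried safely in a scratch directory. The sketch below is one way to do that, not the article's exact command. The archive name `pages.tar.gz` and the sample file names are hypothetical, and the `-v` option is left out to keep the output short.

```shell
# Work in a scratch directory so no real files are touched.
start_dir=$PWD
workdir=$(mktemp -d)
cd "$workdir"

# Two sample PAGE files (one with a space in its name) and one unrelated file.
touch 'intro.page' 'getting started.page' 'notes.txt'

# find emits null-terminated names; xargs -0 splits on those nulls and passes
# every name to a single tar call. "pages.tar.gz" is a hypothetical archive name.
find . -name '*.page' -type f -print0 | xargs -0 tar -czf pages.tar.gz

# List the archive contents to confirm that only the .page files were stored.
listing=$(tar -tzf pages.tar.gz | sort)
echo "$listing"

# Return to the starting directory and clean up.
cd "$start_dir"
rm -rf "$workdir"
```

The file with a space in its name is the one to watch here: without `-print0` and `-0`, xargs would split it into two arguments and tar would report that neither exists.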
